Building SaaS Features Faster with AI-Driven Signal APIs

Turn real-time data into automated workflows with AI-driven signal APIs to cut development cycles, improve accuracy, and speed SaaS feature releases.

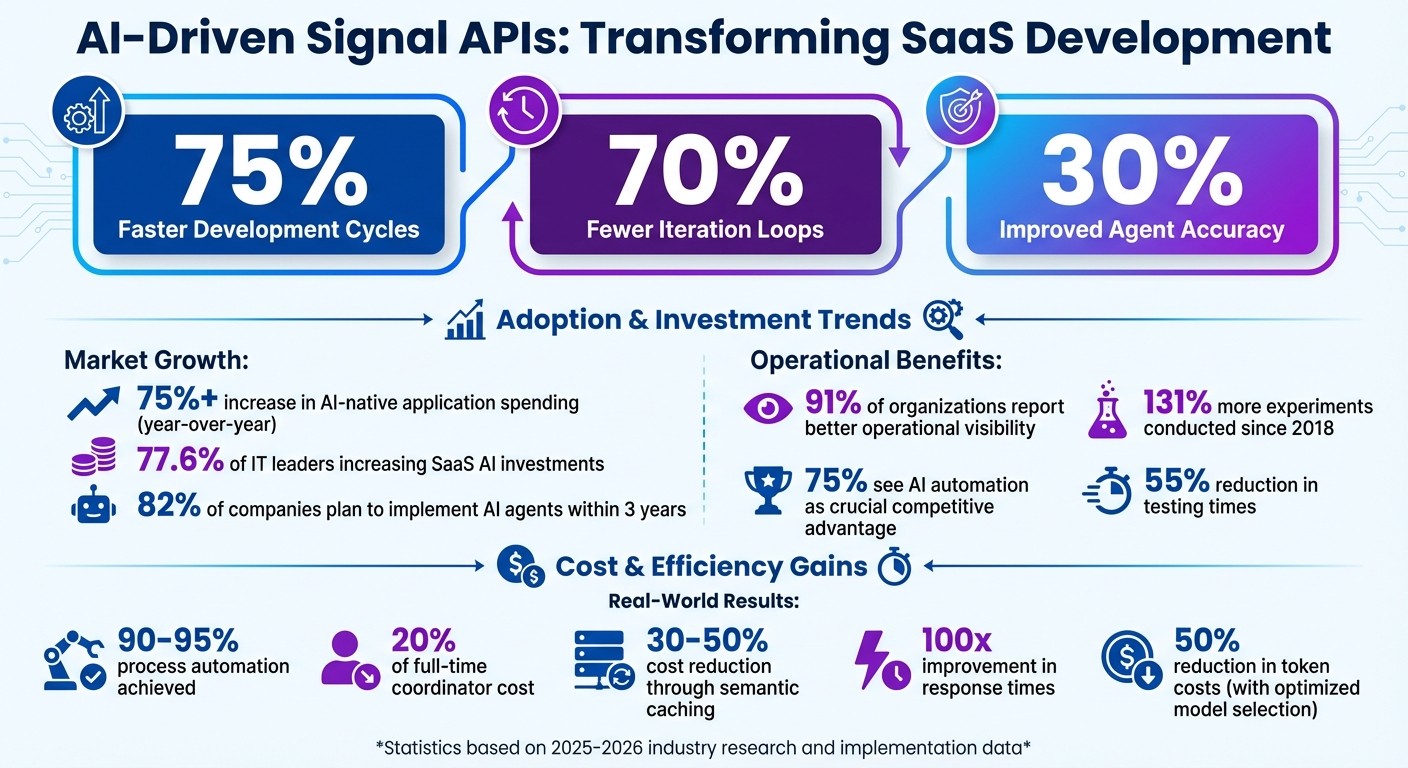

AI-driven signal APIs are transforming SaaS development by drastically reducing feature rollout times and simplifying complex workflows. These APIs process real-time data with large language models (LLMs) to automate decisions, manage tasks, and even escalate issues to humans when needed. Companies report up to 75% faster development cycles and 70% fewer iteration loops using these tools.

Key Takeaways:

What They Do: Turn raw data (e.g., CRM updates, support tickets) into actionable insights using AI, bypassing rigid rule-based systems.

How They Work: Use components like reasoning models, external APIs, and task handoff mechanisms to execute multi-step tasks.

Why They Matter: Help SaaS teams deliver features faster, improve data accuracy, and automate workflows, saving time and resources.

AI-Driven Signal APIs Impact on SaaS Development: Key Statistics and Benefits


Key Benefits of Using AI-Driven Signal APIs in SaaS

AI-driven signal APIs bring measurable advantages that improve development speed and operational efficiency. They streamline automation, enhance data accuracy, and accelerate the release of new features.

Improved Automation and Workflow Efficiency

Signal APIs act as a seamless execution layer, moving tasks across systems without requiring constant human input. Developers can simply outline tasks in natural language, and the API handles the rest, significantly reducing development time.

Research shows that 91% of organizations experience better operational visibility after adopting AI-driven automation, and 75% see it as a crucial competitive advantage. These APIs follow a structured process: gathering data, analyzing it with machine learning, automating actions, and refining their performance based on feedback.

For example, a SaaS company used an AI-powered collections solution to overhaul its revenue management. Before implementation, it dealt with 200 duplicate invoices and lacked a formal collections strategy. Within a month, 90–95% of the process was automated, achieving the productivity of a full-time coordinator at just 20% of the cost. This allowed the sales team to focus on strategic initiatives. As Brian Dinh from Gather explained:

"All from a single Slack thread - no new logins, no extra dashboards."

This kind of automation extends to tasks like resolving support tickets or closing payment gaps, enabling teams to make faster, more accurate decisions.

Enhanced Data Accuracy and Smarter Decisions

Unlike traditional rule-based systems, which often fail in ambiguous situations, AI-driven signal APIs excel at interpreting subtle contextual cues. They can identify issues that predefined rules might miss, improving agent accuracy by around 30% when systematic evaluations are applied.

These APIs pull real-time data from CRMs, ERPs, marketing tools, and social platforms, applying filters and safety checks to ensure decisions are accurate. Some organizations even use AI agents during deployment to add safeguards like feature gates and error logging automatically. This ensures safer rollouts, even for teams without extensive system expertise.

By capturing real-time behaviors and errors, these systems enable timely interventions, reducing risks and speeding up development cycles.

Accelerated Feature Deployment

In the fast-paced SaaS industry, speed is everything. With 72% of enterprise buyers noting similar features across SaaS products and B2B deals taking nearly four weeks longer to close, rapid feature deployment can make a big difference.

Signal APIs help by offering pre-built intelligence, eliminating the need to develop complex capabilities like natural language processing or predictive analytics from scratch. Companies taking this approach have reportedly run 131% more experiments since 2018 and cut testing times by 55%, enabling faster updates and releases.

The urgency to adopt AI is reflected in market trends. Spending on AI-native applications has jumped by over 75% in just one year, and 77.6% of IT leaders report increasing investments in SaaS platforms with AI capabilities. Additionally, 82% of companies plan to implement AI agents within the next three years to stay competitive.

As Vedant Sharma put it:

"The best AI SaaS tools in 2026 don't promise intelligence. They deliver work completed, errors reduced, and time returned."

How to Choose the Right AI-Driven Signal APIs for Your SaaS Platform

Choosing the best signal API means matching it to your platform's specific needs, future goals, and security requirements. A poor choice can lead to slower development or compliance issues down the line. Here's a breakdown of what to consider for seamless integration.

Evaluating Core Features and Integrations

The first step is understanding what your platform requires. If your tasks involve complex operations like code generation or managing multiple tools, advanced reasoning models are a must. For simpler, speed-focused tasks, non-reasoning models are a better fit.

Look for APIs that include native tools to minimize custom development. Features like web search, file retrieval for retrieval-augmented generation (RAG), and code interpretation should be built in. For instance, OpenAI's GPT-4o search preview hits 90% accuracy on factual queries, while its mini version achieves 88%.

State management is another key feature for handling multi-turn interactions. APIs like the Responses API automatically manage conversation histories, which is critical for complex workflows.

Also, check for support for structured JSON outputs and orchestration capabilities to simplify task delegation. To evaluate performance, start by prototyping with the flagship model. Once you're satisfied with accuracy, you can switch to smaller models to optimize for cost and speed.
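Structured JSON output is only useful if your code rejects replies that drift from the contract. The sketch below shows one way to validate a model's JSON reply before it reaches downstream systems. The `TicketSignal` shape, its field names, and the allowed categories are illustrative assumptions, not part of any specific provider's API.

```python
import json
from dataclasses import dataclass

# Hypothetical signal shape; the field names are illustrative only.
@dataclass(frozen=True)
class TicketSignal:
    category: str
    priority: int
    escalate: bool

ALLOWED_CATEGORIES = {"billing", "bug", "feature_request"}
EXPECTED_FIELDS = {"category", "priority", "escalate"}

def parse_signal(raw: str) -> TicketSignal:
    """Parse a model's JSON reply and reject anything off-contract."""
    data = json.loads(raw)  # malformed JSON raises json.JSONDecodeError (a ValueError)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if set(data) != EXPECTED_FIELDS:
        raise ValueError(f"unexpected or missing fields: {sorted(set(data) ^ EXPECTED_FIELDS)}")
    category = data["category"]
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")
    priority = data["priority"]
    # type(...) is int deliberately excludes booleans, which are ints in Python.
    if type(priority) is not int or not 1 <= priority <= 5:
        raise ValueError("priority must be an integer from 1 to 5")
    if type(data["escalate"]) is not bool:
        raise ValueError("escalate must be true or false")
    return TicketSignal(category, priority, data["escalate"])
```

Treating every failure as a `ValueError` gives the caller a single place to decide whether to re-prompt, fall back, or escalate to a human.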

Planning for Scalability and Future Growth

Scalability is just as important as core features. Multi-agent orchestration allows workflows to be distributed across specialized agents, speeding up execution.

Consider the context length supported by the API. For example, GPT-5.2 offers a 400,000-token capacity, ideal for managing large-scale conversations or data-heavy tasks.

Use modular SDKs to build flexible workflows. Open-source frameworks like the Agents SDK let you add tools or agents without reworking the entire system, giving you room to grow as your platform evolves.

Administrative tools are another must-have. APIs should provide detailed usage tracking, billing alerts, and role-based access controls (RBAC) to help you manage costs and maintain security at scale. Set up usage alerts early to monitor for overages or unusual activity that might point to security issues.

Checking Data Security and Compliance

Security should be a top priority alongside functionality. Start by verifying compliance certifications. Make sure the provider holds SOC 2 Type 2 certification and offers BAAs for HIPAA compliance if your platform operates in healthcare.

Pay close attention to data usage policies. The provider should have a "no training on your data" policy to ensure your proprietary information isn't used to improve their models. Look for zero data retention policies and data residency controls to meet regional laws on sovereignty.

As of January 2026, 82% of organizations plan to integrate generative AI into their data security strategies, up from 64% the year before. This shift highlights the growing need to prioritize AI security from the start.

Check encryption standards, such as AES-256 for data stored and TLS 1.2+ for data in transit. Advanced network controls like mTLS and IP allowlisting add another layer of protection. For access management, enterprise-grade options like SSO, MFA, and RBAC are essential.

Built-in guardrails are also important. Features like PII filters, safety classifiers, and relevance classifiers can prevent data leaks or inappropriate outputs. Assign risk levels to API tools - read-only tools are low risk, while those with write access to sensitive systems should require human oversight.
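The risk-level idea can be sketched as a small gate in front of tool execution. This is a minimal illustration under assumptions: the `Risk` levels, the `Tool` record, and the `PENDING_APPROVAL` marker are hypothetical names, not features of a particular SDK.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

class Risk(Enum):
    LOW = "low"    # read-only tools
    HIGH = "high"  # tools with write access to sensitive systems

@dataclass(frozen=True)
class Tool:
    name: str
    risk: Risk
    run: Callable[[dict], str]

def execute(tool: Tool, args: dict, approved_by: Optional[str] = None) -> str:
    """Run low-risk tools directly; hold high-risk tools until a human approves."""
    if tool.risk is Risk.HIGH and approved_by is None:
        # Nothing is executed here; the caller is expected to queue a review.
        return f"PENDING_APPROVAL:{tool.name}"
    return tool.run(args)

# Illustrative tools: one read-only lookup, one write action.
lookup = Tool("lookup_invoice", Risk.LOW, lambda a: f"invoice {a['id']} found")
refund = Tool("issue_refund", Risk.HIGH, lambda a: f"refunded {a['id']}")
```

Calling `execute(refund, {"id": "42"})` returns the pending marker, while the same call with `approved_by="alice"` runs the tool.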

Finally, avoid hard-coding API keys. Instead, use environment variables or secret management tools. Integrated tracing tools can help you monitor agent workflows and spot policy violations in real-time.
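A minimal sketch of reading a key from the environment instead of the source code follows. The variable name `SIGNAL_API_KEY` is an assumption for illustration; use whatever name your provider or secret manager expects.

```python
import os

def load_api_key(var_name: str = "SIGNAL_API_KEY") -> str:
    """Read the API key from the environment and fail fast if it is missing."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Failing at startup is safer than sending unauthenticated requests later.
        raise RuntimeError(f"{var_name} is not set; configure it in your environment or secret manager")
    return key
```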

Best Practices for Implementing AI-Driven Signal APIs

To speed up SaaS feature development with AI-driven signal APIs, it's essential to focus on governance, performance tracking, and treating AI outputs as recommendations rather than absolute truths.

Planning the Integration Process

Before diving into coding, set clear success metrics like latency, accuracy, and cost-to-value. These benchmarks help you measure the success of your integration efforts.

When selecting models, match their capabilities to the task at hand. Use reasoning models for complex tasks and faster, more cost-efficient models for simpler ones. This approach can reduce token costs by up to 50%.
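One way to express this matching is a small routing function. The sketch below is illustrative only: the model names are placeholders, and the two flags are a deliberately simple stand-in for whatever complexity signal your platform actually has.

```python
from dataclasses import dataclass

# Placeholder model identifiers; substitute the names your provider uses.
REASONING_MODEL = "reasoning-model"
FAST_MODEL = "fast-model"

@dataclass(frozen=True)
class Task:
    prompt: str
    needs_tools: bool = False  # e.g. the task must call multiple tools
    multi_step: bool = False   # e.g. code generation or multi-step planning

def pick_model(task: Task) -> str:
    """Send complex work to the reasoning model and everything else to the cheaper one."""
    if task.needs_tools or task.multi_step:
        return REASONING_MODEL
    return FAST_MODEL
```

Keeping the routing rule in one function makes it easy to tighten or relax later as you measure cost and accuracy.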

For workflows, choose an integration approach that fits the complexity. Single-agent systems are ideal for straightforward tasks, while multi-agent systems (using Manager or Decentralized patterns) are better suited for intricate workflows requiring division of responsibilities. From the start, implement multi-layered guardrails - such as relevance filters, safety protocols, PII detection, and moderation tools - to mitigate risks like data privacy breaches or reputational harm.

Centralizing key features like authentication, rate limiting, and schema validation through an API gateway is another critical step. This setup adds a protective layer between your platform and the unpredictable nature of AI systems, reducing the risk of cascading failures.
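Rate limiting is one of the gateway responsibilities that is easy to prototype. The sketch below uses a token bucket, a common technique chosen here for illustration and not prescribed by the text above. The class name and parameters are assumptions, and a production gateway would normally rely on its built-in limiter.

```python
import time

class TokenBucket:
    """Allow short bursts up to `capacity` while refilling at a steady rate."""

    def __init__(self, capacity: int, refill_per_sec: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock              # injectable so the limiter can be tested
        self.tokens = float(capacity)   # start with a full bucket
        self.last = clock()

    def allow(self) -> bool:
        """Return True and consume a token if the request may proceed."""
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A gateway would typically keep one bucket per API key or tenant and return HTTP 429 when `allow()` is false.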

Once the integration plan is solid, the focus shifts to ongoing performance monitoring and refinement.

Monitoring and Improving API Performance

After implementation, continuous performance tracking becomes essential. Go beyond traditional metrics like latency and error rates. Monitor prompt traits, token usage, and business outcomes to get a full picture of your API’s impact. Since AI models can produce different outputs for the same input, testing requires a unique approach.

Develop a "golden set" of representative prompts with expected outcomes. Use this set to test new model versions against your baseline and detect quality shifts early. Benchmarks of this kind also make gains measurable: in March 2025, Hebbia integrated OpenAI's web search tool for asset managers and law firms, and the real-time search feature delivered tailored market insights that surpassed earlier benchmarks.
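A golden set can start as a plain list of prompt and expected-label pairs plus a pass-rate check. The sketch below is a minimal illustration: the three sample prompts, the `classify` callable, and the 2-point tolerance are assumptions, and a real set would be far larger.

```python
from typing import Callable, List, Tuple

# Illustrative golden set: (prompt, expected label).
GOLDEN_SET: List[Tuple[str, str]] = [
    ("Customer says their card was charged twice", "billing"),
    ("App crashes on login", "bug"),
    ("Please add dark mode", "feature_request"),
]

def pass_rate(classify: Callable[[str], str], golden=GOLDEN_SET) -> float:
    """Fraction of golden prompts for which the model returns the expected label."""
    hits = sum(1 for prompt, expected in golden if classify(prompt) == expected)
    return hits / len(golden)

def regressed(candidate_rate: float, baseline_rate: float, tolerance: float = 0.02) -> bool:
    """Flag a new model version whose pass rate drops below baseline minus tolerance."""
    return candidate_rate < baseline_rate - tolerance
```

Here `classify` stands for whatever function wraps your model call, so the same check can run against the current baseline and any candidate version.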

Introduce semantic caching with vector embeddings to recognize similar queries and return cached responses for differently phrased questions. This method can slash costs by 30% to 50% and improve response times by up to 100x. Set up financial alerts in your API dashboard to detect unusual usage spikes before they escalate.
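The lookup logic of a semantic cache can be sketched in a few lines. Note the assumptions: `toy_embed` is a bag-of-words stand-in so the example runs without external services (a real system would call an embedding model and a vector store), and the 0.8 similarity threshold is an arbitrary illustrative value.

```python
import math
from collections import Counter

def toy_embed(text: str) -> Counter:
    """Stand-in embedding: word counts. Replace with a real embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.8, embed=toy_embed):
        self.threshold = threshold
        self.embed = embed
        self.entries = []  # list of (embedding, cached response)

    def put(self, query: str, response: str) -> None:
        self.entries.append((self.embed(query), response))

    def get(self, query: str):
        """Return the cached response for the most similar query, or None on a miss."""
        q = self.embed(query)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(q, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None
```

On a miss, the caller invokes the model and stores the result with `put`. The threshold trades hit rate against the risk of returning an answer to a question that only looks similar.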

"The companies that avoid these disasters spend less time coding and more time measuring. They obsess over latency budgets and failure modes before they write their first API call." - Molisha Shah, GTM and Customer Champion, Augment Code

Start by using your most capable model to establish a performance baseline. Once you’ve set that standard, experiment with smaller models to reduce costs and latency without compromising accuracy. For large documents, manage context windows with rolling windows or semantic chunking to prevent token overflow issues.
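A rolling window over a large document can be sketched as overlapping chunks. This example counts whitespace-separated words as a rough proxy for tokens, which is an assumption: real token counts depend on the model's tokenizer, and semantic chunking would split on meaning instead of position.

```python
def rolling_chunks(words: list, size: int, overlap: int) -> list:
    """Split `words` into windows of `size` items, each sharing `overlap` items with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # the last window already reaches the end of the document
    return chunks
```

The overlap keeps some context from the end of one window at the start of the next, so sentences that straddle a boundary are not lost.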

Training Teams to Use Signal APIs

Your team needs to understand that AI APIs are probabilistic systems, not traditional REST endpoints. Train developers to approach prompt writing as if they were drafting product specifications. This means defining roles, goals, guardrails, and success metrics instead of relying on prose.

Build evaluation datasets with at least 30 test cases per agent. These datasets should cover success scenarios, edge cases, and potential failure modes to catch issues before production. When designing external capabilities, treat them as tools with strict input/output contracts. For high-risk or irreversible actions, establish clear human-in-the-loop escalation protocols.
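A quick coverage check can stop an agent from shipping with a thin evaluation dataset. The sketch below assumes each test case is a dictionary with a `kind` field. That field and its three values are illustrative conventions, while the minimum of 30 cases comes from the guidance above.

```python
from collections import Counter

MIN_CASES = 30
REQUIRED_KINDS = {"success", "edge_case", "failure"}

def dataset_gaps(cases: list) -> list:
    """Return a list of human-readable problems; an empty list means the dataset passes."""
    problems = []
    if len(cases) < MIN_CASES:
        problems.append(f"only {len(cases)} cases, need at least {MIN_CASES}")
    kinds = Counter(case.get("kind") for case in cases)
    for kind in sorted(REQUIRED_KINDS - set(kinds)):
        problems.append(f"no {kind} cases")
    return problems
```

Running this in CI for each agent makes the coverage requirement enforceable instead of aspirational.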

"It's not the prompts that break. It's everything around them. Error handling, context management, tool contracts, traceability." - Alina Capota, Senior Manager, Forward Deployed Engineering, UiPath

Convert your standard operating procedures (SOPs) and support scripts into routines that large language models (LLMs) can follow. This ensures the API adheres to your business logic. Use trace logs to identify inefficiencies in your system. Avoid simple retry mechanisms - since AI outputs are variable, retries rarely lead to better results.

Conclusion: Using Signal APIs to Build SaaS Features Faster

AI-driven signal APIs are changing how SaaS teams approach feature development. The figures cited earlier, up to 75% faster development cycles and 70% fewer iteration loops, point to significantly shorter timelines. Individual tasks that used to take months can now be accomplished in hours, and teams can move from concept to production in two sprints instead of two quarters.

Take unified APIs like the Responses API, for example. These APIs simplify development by combining chat, tool usage, and reasoning into a single call. They’re powered by features like native web search (with 90% factual accuracy) and integrated tool functions, which reduce vendor complexity and make integration smoother. One organization, for instance, used these APIs to automate application processing in just days - a task that traditional RPA systems took months to handle.

But technology alone isn’t enough. Teams also need to focus on process discipline. Establishing performance baselines, adding layered guardrails, and running systematic evaluations are essential to maintaining quality. Techniques like rigorous testing and smart caching can further improve accuracy while keeping costs in check.

To build on these efficiency gains, SaaS teams should adopt sustainable practices. Competitive advantage lies in actively monitoring probabilistic AI APIs rather than setting them up and walking away. By versioning prompts through dashboards, adding risk-based safeguards, and planning for human-in-the-loop escalation, platforms can roll out features with confidence and control.

As the industry moves toward autonomous agents that work 24/7 across multiple channels, the real challenge isn’t deciding whether to use AI-driven signal APIs - it’s how quickly you can integrate them. This speed not only accelerates feature deployment but also strengthens your SaaS strategy, helping you keep up with customer demands and drive long-term success.

FAQs

How can AI-driven signal APIs help SaaS companies build features faster?

AI-driven signal APIs give SaaS companies a powerful way to accelerate feature development by providing real-time insights that improve automation and simplify workflows. These APIs let teams integrate dynamic data signals quickly, allowing for responsive and flexible features without requiring heavy manual coding. The result? Shorter development timelines and greater efficiency.

By constantly analyzing live data, signal APIs also enhance data accuracy and system dependability, cutting down on errors and reducing the need for manual fixes. This leads to faster iteration cycles and quicker rollout of new features, helping SaaS platforms adapt to users' changing needs with ease. Companies leveraging these APIs can save valuable time while delivering smarter, more responsive products.

What security measures should I consider when using AI-driven signal APIs?

When working with AI-driven signal APIs, security must be a top priority to safeguard sensitive information, maintain system reliability, and earn user confidence. Start with securing the data pipeline - this ensures that data remains protected from unauthorized access or tampering during sourcing and ingestion.

Beyond that, make sure both the data and AI models are stored in a secure environment. This helps prevent breaches or any potential manipulation. The entire AI development process - from training and evaluation to deployment - should also be protected to minimize risks like model bias or adversarial attacks.

To bolster security, implement strong authentication, authorization, and encryption protocols. These measures help control API access and reduce the chances of data leaks. Additionally, safety checks are vital to ensure the system behaves as expected, especially when integrating multiple signals or tools.

In short, a thorough, end-to-end security approach is essential to safely harness the power of AI signal APIs.

How can teams ensure AI-driven signal APIs are accurate and reliable in their workflows?

To maintain the accuracy and dependability of AI-driven signal APIs, certain best practices are essential. First, focus on validating data sources. Ensure that the information feeding into the APIs is consistent, high-quality, and relevant. This step minimizes errors and strengthens trust in the API's results.

Another critical step is regular monitoring and testing. Implement automated checks to quickly identify anomalies or any unexpected behavior. This allows teams to address potential issues before they escalate.

Lastly, don't overlook the importance of human oversight. By incorporating feedback loops and human review, you can fine-tune the API’s performance over time. This ensures it continues to deliver accurate and contextually relevant outputs.

By combining these strategies - data validation, continuous testing, and human input - you can confidently integrate AI-driven signal APIs into your workflows while maintaining their reliability.
