From Raw Events to Actionable Signals Using a Single API Layer

A single API layer turns anonymous event streams into immediate, actionable sales signals, cutting pipeline setup from months to days and lead routing to milliseconds.

Turning raw data into actionable insights is critical for B2B success, but it’s often time-consuming and inefficient. A single API layer solves this by automating data collection, enrichment, and lead qualification, enabling sales teams to act on opportunities in milliseconds. Here's why this matters:

Speed: Manual data processing delays lead response times. An API layer can return basic lead details in around 50 milliseconds.

Efficiency: It eliminates the need for custom pipelines, reducing setup time from months to days.

Automation: Automatically scores and qualifies leads based on behavior, firmographics, and technographics.

Scalability: Handles millions of events monthly with real-time processing, ensuring consistent performance as your business grows.

Integration: Syncs seamlessly with CRMs and marketing tools, keeping data consistent and actionable.

Event Data and Its Role in B2B Lead Generation

What Is Raw Event Data?

Raw event data refers to the unprocessed digital footprints left behind during online interactions. This includes everything from website visits and button clicks to email opens, video plays, and chat engagements. Each action generates a data point containing details like IP addresses, timestamps, session duration, URLs visited, and device information.

For instance, imagine a prospect visiting your pricing page. Raw event data might log their IP address (e.g., 203.0.113.45), the exact time of the visit, the page they viewed, how long they stayed, and the actions they took next. While this data is rich in detail, turning it into actionable insights is no small feat.
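To make the pricing page example concrete, here is a minimal sketch of what one raw event record might look like. The class name, field names, and sample values (other than the IP address above) are assumptions for illustration, not a schema taken from any particular platform.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class RawEvent:
    """One unprocessed interaction, roughly as a tracking script might log it."""
    event_type: str
    ip_address: str
    timestamp: datetime
    url: str
    session_id: str
    duration_seconds: float
    device: str


# Hypothetical pricing page visit; every value except the IP is made up.
event = RawEvent(
    event_type="page_view",
    ip_address="203.0.113.45",
    timestamp=datetime(2025, 1, 15, 14, 30, tzinfo=timezone.utc),
    url="https://example.com/pricing",
    session_id="sess-001",
    duration_seconds=42.0,
    device="desktop",
)

record = asdict(event)  # plain dict, ready to serialize or store
```

Note that nothing in this record says which company the visitor belongs to or whether they are worth a follow-up. That gap is what the later processing stages fill.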

The Problems with Manual Data Processing

Processing raw event data manually is a time-consuming and inefficient task. Many B2B companies gather massive amounts of event data but struggle to extract useful insights. The process often involves exporting data from various platforms, matching IP addresses to company names, cross-referencing CRM records, and manually scoring leads based on behavior. This can take hours - or even days.

"Building your own tracking and enrichment pipeline takes months. Maintaining it takes years."

LeadBoxer Platform

Even after investing in such a pipeline, ongoing maintenance demands dedicated backend and data engineers. By the time the processed data is ready, the prospect may have already moved on, and your window of opportunity could be lost.

Another challenge is the gap between data collection and actionable insights. For example, an analytics dashboard might report 500 website visitors in a week. But without knowing which companies those visitors represent or whether they align with your target audience, the data offers little value for generating leads.

Why Actionable Signals Matter for Lead Generation

To overcome these challenges, raw event data must be transformed into actionable signals. These signals enrich raw data, turning it into intelligence that can directly impact revenue. Instead of just recording an IP address visiting a page, actionable signals identify the company behind the visit, its industry, estimated revenue, and even a lead score. This enriched information allows for immediate, targeted follow-ups.

| Feature | Raw Event Data | Processed & Qualified Insights |
|---|---|---|
| Content | IP addresses, timestamps, URLs | Company names, industry, revenue, lead scores |
| Utility | Difficult to interpret without further processing | Ready for dashboards, CRM systems, and sales outreach |
| Context | Fragmented and anonymous | Enriched, identified, and aligned with ideal customer profiles |
| Actionability | Low; requires extensive manual work | High; supports automated workflows and targeted outreach |

With actionable insights, sales and marketing teams can focus their efforts where it matters most. For example, if enriched data reveals that a prospect from a high-priority industry has engaged with key content, you can immediately trigger personalized follow-ups, assign the lead to the right team member, and prioritize outreach based on their intent to buy. This approach eliminates guesswork, streamlines processes, and allows you to scale lead generation effectively.

How a Single API Layer Processes Event Data

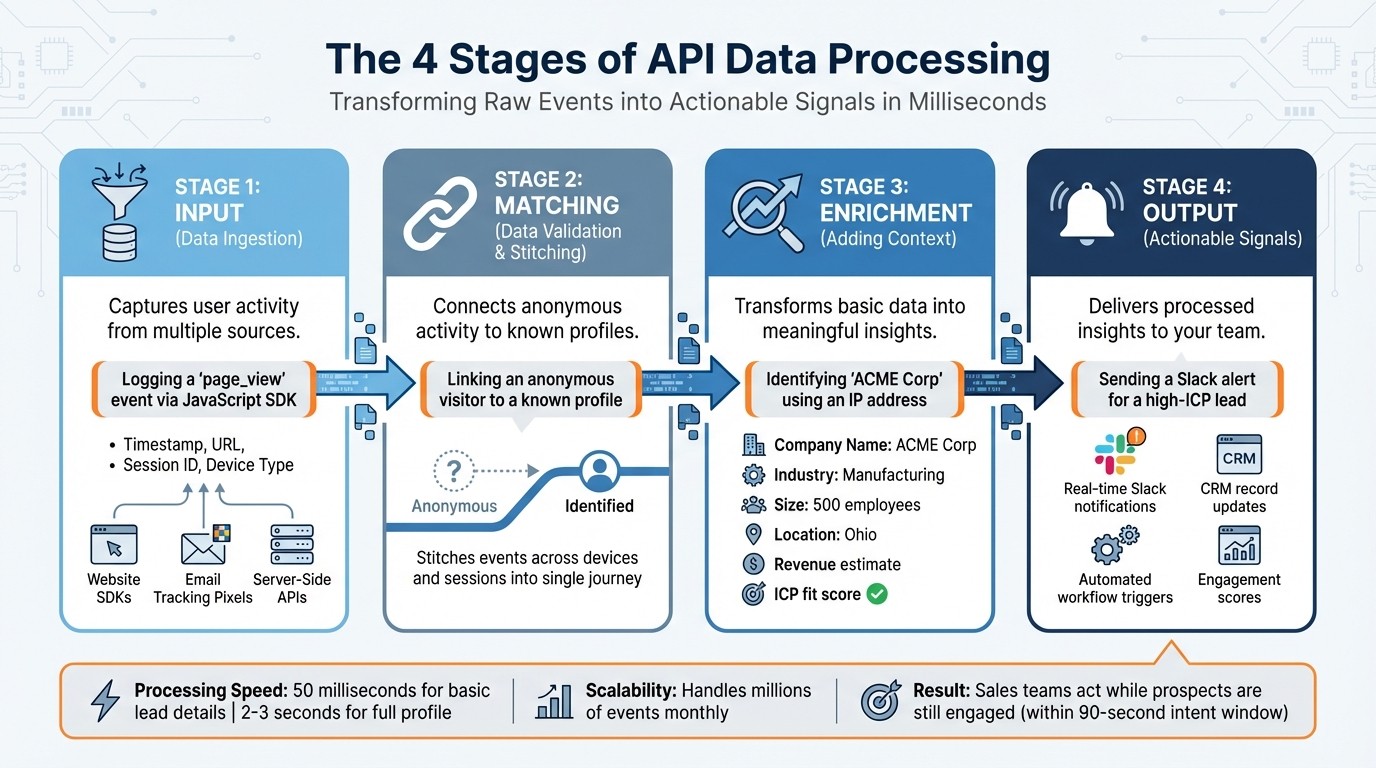

4 Stages of API Data Processing: From Raw Events to Actionable Signals

A single API layer streamlines lead generation by combining tracking, enrichment, and routing into one centralized system. Instead of piecing together separate pipelines - a process that can take 6 to 12 months and demands significant backend and data engineering resources - this approach simplifies everything. The result? Your team can deploy functional solutions in days, not months, freeing them to focus on product development rather than maintaining infrastructure.

Raw event data from sources like website SDKs, email tracking pixels, and server-side APIs is processed in four stages, turning anonymous user activity into actionable insights. This transformation enables your sales team to act quickly, often within milliseconds. Speed matters because buyer intent signals tend to fade within just 90 seconds. Manual workflows simply can't match this level of responsiveness.

The system also scales effortlessly. Load-balanced and geo-distributed environments handle massive data volumes asynchronously, adapting as your business grows from thousands to millions of events per month. This eliminates the ongoing maintenance that custom-built solutions require.

The 4 Stages of API Data Processing

Every event processed by the API layer passes through four stages, each adding more context and intelligence to the raw data.

Input (Data Ingestion): This stage captures user activity from various sources. For example, when someone visits your pricing page, the JavaScript SDK logs a "page_view" event, recording details like timestamp, URL, session ID, and device type. Email tracking pixels log opens and clicks, while server-side APIs capture interactions like form submissions. All these data streams converge into a unified system, creating a comprehensive record of user behavior.

Matching (Data Validation & Stitching): Here, the system connects anonymous activity to known profiles. For instance, if a visitor browses your site anonymously and later fills out a demo request form, the API stitches these events together into a B2B customer journey. This process ensures a complete view of each prospect’s behavior, even across different devices and sessions.

Enrichment (Adding Context): Basic data is transformed into meaningful insights. An IP address, for example, can reveal a company’s name, industry, size, and revenue. The system also identifies whether the visitor fits your Ideal Customer Profile (ICP) and assigns attributes based on their behavior. For instance, if an IP address belongs to "ACME Corp", a 500-employee manufacturing firm in Ohio, this information becomes immediately actionable for qualification and routing.

Output (Actionable Signals): The final stage delivers processed insights directly to your team. Instead of leaving raw data in a database, the API sends real-time notifications - like a Slack alert when a high-ICP lead views your demo page. It also updates CRM records with engagement scores and triggers automated workflows based on behavioral thresholds. This ensures decision-makers receive the information they need instantly.

| Stage | Technical Action | Practical Example |
|---|---|---|
| Input | Data Ingestion | Logging a "page_view" event via JavaScript SDK |
| Matching | Identity Resolution | Linking an anonymous visitor to a known profile |
| Enrichment | Contextual Data Addition | Identifying "ACME Corp" using an IP address |
| Output | Delivering Actionable Signals | Sending a Slack alert for a high-ICP lead |

These stages ensure data is enriched, validated, and delivered efficiently, all within a framework designed for speed and reliability.
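The four stages can be sketched as a chain of small functions. This is a simplified illustration, not any vendor's implementation: the lookup tables, the field names, and the 200-employee ICP rule are all assumptions, and a real system would call identity and firmographic services rather than in-memory dictionaries.

```python
from typing import Optional

# Hypothetical lookup tables standing in for real identity and firmographic services.
KNOWN_SESSIONS = {"sess-001": "jane@acme.example"}
IP_TO_COMPANY = {
    "203.0.113.45": {"company": "ACME Corp", "industry": "Manufacturing", "employees": 500},
}
MIN_ICP_EMPLOYEES = 200  # assumed ICP rule, for illustration only


def ingest(raw: dict) -> dict:
    """Input: reject events that lack the fields later stages rely on."""
    missing = [f for f in ("event", "ip", "session_id", "url") if f not in raw]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return dict(raw)


def match(event: dict) -> dict:
    """Matching: attach a known contact to the session when one exists."""
    return {**event, "contact": KNOWN_SESSIONS.get(event["session_id"])}


def enrich(event: dict) -> dict:
    """Enrichment: resolve the IP address to company attributes."""
    company = IP_TO_COMPANY.get(event["ip"])
    fits_icp = bool(company) and company["employees"] >= MIN_ICP_EMPLOYEES
    return {**event, "company": company, "fits_icp": fits_icp}


def output(event: dict) -> Optional[str]:
    """Output: produce an alert message for high-ICP demo page views."""
    if event["fits_icp"] and event["url"] == "/demo":
        return f"High-ICP lead: {event['company']['company']} viewed {event['url']}"
    return None


def process(raw: dict) -> Optional[str]:
    """Run one raw event through all four stages."""
    return output(enrich(match(ingest(raw))))


alert = process({"event": "page_view", "ip": "203.0.113.45",
                 "session_id": "sess-001", "url": "/demo"})
```

Keeping each stage as a pure function that returns a new dictionary makes it easy to test stages in isolation and to swap a lookup table for a real service later.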

Scalability and Real-Time Processing

API layers are built to grow with your business. Unlike custom pipelines that require constant re-engineering as data volumes rise, API platforms use secured, load-balanced, and geo-distributed environments to scale automatically. Whether you're processing 50,000 or 10 million events monthly, performance remains consistent without manual adjustments.

Real-time processing ensures insights are delivered quickly. While the API can return basic lead details in around 50 milliseconds, completing a full profile takes only 2-3 seconds. This slight delay balances speed with data consistency, enabling sales teams to act while prospects are still engaged. Timely, enriched outreach can drive reply rates between 25% and 40%.

For companies operating globally, the API layer ensures compliance with local regulations like GDPR by maintaining data residency. As your needs evolve, the system adapts seamlessly. New data sources or routing destinations can be integrated through simple configurations, ensuring your infrastructure scales alongside your strategy. This adaptability guarantees consistent, real-time insights, no matter how complex your lead generation becomes.

Key Features of Event Data APIs

The right API features can make all the difference in turning raw data into actionable insights for lead generation. Three standout capabilities - real-time synchronization across multiple sources, automated lead scoring, and seamless CRM integration - play a critical role in helping sales teams act swiftly on buyer intent.

Real-Time Data Synchronization and Multi-Source Integration

Event data APIs gather information from various sources, such as website analytics, email platforms, web forms, landing pages, and live chat systems, and consolidate it into unified user profiles. For instance, when a prospect clicks a link in an email, fills out a form, or visits your pricing page, these actions are captured and processed instantly - eliminating the need for manual dashboard reviews.

Bidirectional webhooks ensure data flows seamlessly across platforms. For example, when a contact form is submitted, the API updates your CRM and marketing tools in real time, keeping records consistent and preventing duplicates. Many tools also enrich these profiles by leveraging public data and algorithms to provide deeper insights.

By integrating multiple sources, the API captures signals that single-channel tracking might miss. Imagine a visitor starts out browsing your website anonymously, later engages through email, and finally identifies themselves in a live chat. The API stitches these interactions together, creating a comprehensive view of the prospect's journey. This integrated approach not only enhances personalization but also sets the stage for automated lead scoring, speeding up the qualification process.
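The stitching step described above can be illustrated with a short sketch. It assumes every event carries a shared `visitor_id` (for example from a first-party cookie) and that an `email` field appears once the visitor identifies themselves; both field names are hypothetical.

```python
from collections import defaultdict


def stitch(events: list[dict]) -> dict[str, list[dict]]:
    """Group events per visitor and label each journey with the identity
    revealed by any event in it, or mark it anonymous if none was."""
    by_visitor: dict[str, list[dict]] = defaultdict(list)
    for event in events:
        by_visitor[event["visitor_id"]].append(event)

    journeys: dict[str, list[dict]] = {}
    for visitor_id, visitor_events in by_visitor.items():
        identity = next((e["email"] for e in visitor_events if e.get("email")), None)
        key = identity or f"anonymous:{visitor_id}"
        ordered = sorted(visitor_events, key=lambda e: e["ts"])
        journeys.setdefault(key, []).extend(ordered)
    return journeys


events = [
    {"visitor_id": "v1", "ts": 1, "channel": "web", "action": "page_view"},
    {"visitor_id": "v1", "ts": 2, "channel": "email", "action": "click"},
    {"visitor_id": "v1", "ts": 3, "channel": "chat", "action": "message",
     "email": "jane@acme.example"},
    {"visitor_id": "v2", "ts": 1, "channel": "web", "action": "page_view"},
]
journeys = stitch(events)
```

Here the two earlier anonymous events from visitor `v1` end up in the same journey as the chat message that revealed the email address, while `v2` stays anonymous.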

Automated Lead Scoring and Qualification

Automated lead scoring uses behavioral data, firmographic details, and technographic insights to rank leads based on their engagement. This allows your system to prioritize prospects who show the most interest. For example, a lead from a large company who repeatedly visits your demo page would score higher than someone with minimal interaction.

Many systems now use AI to refine this process, tapping into extensive contact and company databases. This shift focuses on identifying sales-qualified leads (SQLs) rather than just marketing-qualified leads (MQLs), saving time and effort. As prospects continue to engage - whether by revisiting your pricing page or downloading a case study - their scores are updated in real time, ensuring your sales team always targets the most engaged leads.
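As a rough illustration of how behavioral, firmographic, and technographic inputs might combine into one score, consider the rule-based sketch below. The point values, the cap, and the employee thresholds are invented for the example; AI-based systems would learn such weights from conversion data rather than hard-code them.

```python
# Assumed point values per behavior; real weights would come from your own data.
BEHAVIOR_POINTS = {
    "pricing_view": 15,
    "demo_view": 25,
    "case_study_download": 20,
    "email_open": 2,
}
MAX_BEHAVIOR_POINTS = 60  # cap so repeated visits cannot dominate the score


def score_lead(events: list[str], employees: int, uses_target_tech: bool) -> int:
    """Return a 0-100 score from behavior, company size, and tech stack."""
    behavior = min(sum(BEHAVIOR_POINTS.get(e, 0) for e in events), MAX_BEHAVIOR_POINTS)
    if employees >= 200:
        firmographic = 25
    elif employees >= 50:
        firmographic = 10
    else:
        firmographic = 0
    technographic = 15 if uses_target_tech else 0
    return behavior + firmographic + technographic


engaged = score_lead(["demo_view", "demo_view", "pricing_view"], employees=500,
                     uses_target_tech=True)
casual = score_lead(["email_open"], employees=10, uses_target_tech=False)
```

Recomputing the score each time a new event arrives is what keeps rankings current as prospects continue to engage.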

CRM and Marketing Tool Integration

Seamless integration with CRM and marketing platforms is another essential feature of modern event data APIs. Native CRM connections ensure data consistency across your tools. Bidirectional synchronization means updates in your CRM are reflected in the API and vice versa. For instance, when a lead's status changes to "Opportunity" in your CRM, it can automatically trigger marketing campaigns or internal alerts.

This integration removes the need for manual data entry, reducing errors and saving time. When a lead reaches a specific engagement level, the API can create tasks for sales reps, update custom fields, and send instant notifications through tools like Slack. Automated workflows based on behavioral triggers can further enrich lead profiles, update scores, sync data, and notify the right team members. This ensures raw event data is transformed into actionable sales activities, streamlining the entire process.

Implementing and Optimizing Event Data APIs

Planning and Integration Setup

Start by securing API keys for both development and production environments. Use dedicated "dev" endpoints during testing to protect your production data. Decide how you’ll integrate: client-side tracking (via GET requests) works well for lightweight events like page views, while server-side tracking (via POST requests) is better for handling complex payloads, batch processing, or sensitive data.

Identify the key events you need to track - like "AppointmentCreated" - and determine the required parameters (e.g., visitor ID, session ID) and match keys (such as hashed emails or phone numbers). For real-time workflows, register callback URLs so your system can receive event updates automatically instead of relying on constant polling. To ensure security, hash all personally identifiable information (PII) using SHA-256 before sending it.
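Hashing match keys before they leave your server might look like the sketch below. The normalization rules (trim and lowercase emails, keep only digits in phone numbers) and the payload field names are assumptions; check the documentation of the API you are sending to, because the receiver must normalize the same way for hashes to match.

```python
import hashlib


def hash_email(email: str) -> str:
    """SHA-256 hex digest of a trimmed, lowercased email address."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def hash_phone(phone: str) -> str:
    """SHA-256 hex digest of the phone number's digits only."""
    digits = "".join(ch for ch in phone if ch.isdigit())
    return hashlib.sha256(digits.encode("utf-8")).hexdigest()


def build_event(name: str, visitor_id: str, session_id: str,
                email: str, phone: str) -> dict:
    """Assemble a server-side event payload with hashed match keys.
    The payload layout is hypothetical."""
    return {
        "event_name": name,
        "visitor_id": visitor_id,
        "session_id": session_id,
        "match_keys": {
            "email_sha256": hash_email(email),
            "phone_sha256": hash_phone(phone),
        },
    }


payload = build_event("AppointmentCreated", "v1", "sess-001",
                      " Jane@Example.com ", "+1 (555) 010-0199")
```

Normalizing before hashing matters because SHA-256 is sensitive to every byte: without it, " Jane@Example.com " and "jane@example.com" would produce unrelated digests.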

Consider setting up dual-channel tracking by pairing traditional web pixels with the Events API. This approach reduces data gaps and improves reliability. Design your integration events as pure data markers - free of execution logic - so they can be independently used by tools like CRM systems, marketing automation platforms, and analytics software. Before development begins, complete internal legal reviews to ensure your data usage complies with policies and regulations.

Once your integration is in place, the focus shifts to performance monitoring and evaluating the return on investment (ROI).

Performance Tracking and ROI Measurement

After implementing your API, it’s crucial to monitor its performance to validate its value and operational efficiency. Use diagnostic tools to confirm that events are being received correctly. Pay close attention to status codes: a 200 indicates success, 400 points to a bad request (often due to invalid data formats), and 403 signals forbidden access, which can occur if an API key is invalid, expired, or lacks the required permission (some APIs return 401 for invalid credentials instead).
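A small helper can turn those status codes into a next action for your monitoring or retry logic. This is a sketch: the action labels are made up, and the handling of 401, 429, and 5xx responses goes beyond the codes discussed above and reflects common HTTP conventions rather than any specific API's contract.

```python
def classify_response(status: int) -> str:
    """Map an HTTP status code to a suggested next action."""
    if 200 <= status < 300:
        return "ok"                 # event accepted
    if status == 400:
        return "fix_payload"        # bad request, usually an invalid data format
    if status in (401, 403):
        return "check_credentials"  # invalid, expired, or under-privileged API key
    if status == 429 or 500 <= status < 600:
        return "retry_later"        # assumed: rate limiting and server errors are transient
    return "investigate"


actions = {code: classify_response(code) for code in (200, 400, 403, 503)}
```

Separating "fix the payload" from "retry later" keeps you from resending requests that can never succeed.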

Track metrics like lead conversion rates, revenue impact, and workflow efficiency to gauge the API's business impact. Ensure server events are properly received, deduplicated, and matched to user identities to improve ad targeting. Building a custom data pipeline can take months of engineering effort and years of maintenance, whereas an API layer reduces the need for a dedicated backend or data engineering team.

Continuous Optimization and Maintenance

Maintaining high API responsiveness requires ongoing optimization based on performance tracking insights. Use multi-level caching (e.g., HTTP headers, Redis/Memcached, CDN) and optimize database indexing to boost performance - these changes can improve API speed by up to 70%. Additionally, compressing payloads can result in a 60% performance gain.
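Payload compression is straightforward to try with the standard library. The sketch below gzips a batch of hypothetical events before sending; it assumes the receiving API accepts gzip-encoded request bodies (signalled with a `Content-Encoding: gzip` header), which you should confirm first. The actual size reduction depends entirely on your data.

```python
import gzip
import json


def compress_batch(events: list[dict]) -> bytes:
    """Serialize a batch of events to JSON and gzip the result."""
    return gzip.compress(json.dumps(events).encode("utf-8"))


def decompress_batch(body: bytes) -> list[dict]:
    """Inverse of compress_batch, as the receiving side would run it."""
    return json.loads(gzip.decompress(body).decode("utf-8"))


# Hypothetical batch of 500 similar page view events.
batch = [{"event": "page_view", "url": "/pricing", "session_id": f"sess-{i:04d}"}
         for i in range(500)]
raw_size = len(json.dumps(batch).encode("utf-8"))
compressed = compress_batch(batch)
ratio = len(compressed) / raw_size  # fraction of the original size
```

Event batches tend to compress well because keys and many values repeat, but very small payloads can actually grow slightly, so it is worth measuring before enabling compression everywhere.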

"API performance is not a feature; it's the foundation of user satisfaction and business scalability." - JIN, Shift Asia

Set up dead-letter queues to capture and debug unprocessed messages, ensuring that no data is permanently lost due to schema errors or system issues. For long-running operations, implement asynchronous processing with message queues like RabbitMQ or Kafka, which can increase system throughput by as much as 50% in high-traffic scenarios. Proactively monitor for latency and error thresholds, with target response times under 200 ms on average and below 500 ms at the 95th percentile. Aim for error rates of less than 1% for HTTP 4xx and below 0.1% for HTTP 5xx.
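The latency and error-rate targets above can be checked against a window of recent requests with a few lines of code. This sketch uses the nearest-rank method for the 95th percentile; the function names and the shape of the result are assumptions, and a production setup would normally rely on a monitoring system rather than ad hoc scripts.

```python
from statistics import mean


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def check_targets(latencies_ms: list[float], statuses: list[int]) -> dict[str, bool]:
    """Compare a window of requests against the targets named in the text."""
    total = len(statuses)
    rate_4xx = sum(400 <= s < 500 for s in statuses) / total
    rate_5xx = sum(500 <= s < 600 for s in statuses) / total
    return {
        "avg_latency_ok": mean(latencies_ms) < 200,  # under 200 ms on average
        "p95_latency_ok": p95(latencies_ms) < 500,   # under 500 ms at p95
        "rate_4xx_ok": rate_4xx < 0.01,              # less than 1% client errors
        "rate_5xx_ok": rate_5xx < 0.001,             # less than 0.1% server errors
    }


# Hypothetical window: mostly fast requests, a few slow ones, two server errors.
latencies = [100.0] * 95 + [600.0] * 5
statuses = [200] * 994 + [400] * 4 + [500] * 2
report = check_targets(latencies, statuses)
```

In this sample window the latency targets pass, but two server errors in a thousand requests already breach the 0.1% threshold, which shows how strict that target is.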

Audit logs regularly to remove unnecessary debugging entries, as excessive logging can inflate API call counts and costs. Monitor schema changes closely to ensure updates to event schemas don’t disrupt downstream systems. Consolidate multiple database operations into single transactions to minimize the number of billable API calls.

Security and Compliance Considerations

Data Encryption and Access Control

Safeguarding sensitive event data requires multiple layers of security. With a growing number of API-related breaches, encryption and strict access control have become non-negotiable.

Secure data in transit using TLS 1.3 and data at rest with AES-256 encryption. A centralized API gateway can help enforce policies like rate limiting, TLS termination, and web application firewalls (WAF). For authentication, rely on OAuth 2.0 or OIDC, and use JWT for internal communications, while opting for opaque tokens for external use.

Follow the principle of Least Privilege by implementing RBAC (Role-Based Access Control) or ABAC (Attribute-Based Access Control), minimizing damage in case of credential compromise. Additionally, mask sensitive fields such as credit card numbers to display only partial data (e.g., the last four digits).
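Field masking can be as simple as the sketch below, which keeps only the last four digits of a card number. The function name and the choice of `*` as the mask character are arbitrary, and masking for display is not a substitute for encrypting or tokenizing the stored value.

```python
def mask_card_number(number: str) -> str:
    """Replace all but the last four digits with asterisks.
    Spaces and dashes in the input are ignored."""
    digits = [ch for ch in number if ch.isdigit()]
    if len(digits) <= 4:
        return "*" * len(digits)  # too short to reveal anything safely
    return "*" * (len(digits) - 4) + "".join(digits[-4:])


masked = mask_card_number("4111 1111 1111 1111")
```

Applying the mask at the API boundary, rather than in each client, means a compromised dashboard or log viewer never sees the full value.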

A notable example of the risks involved occurred in December 2024, when Chinese state-backed hackers exploited a compromised API key from BeyondTrust to breach the U.S. Department of the Treasury. The attackers gained access to government workstations and reset passwords. The incident shows how much damage a single leaked key can cause, and the exposure is widespread: 61% of organizations reportedly expose sensitive secrets like API keys in public or private repositories. To mitigate such vulnerabilities, use short-lived access tokens combined with refresh tokens and regularly rotate API keys.

"If you don't have layers of security measures in place, you're not going to be at the table very long. We need to be ahead of the curve."

Matthew Mazzariello, Development Manager, Artisan

While technical measures are vital, they must be paired with compliance strategies to ensure comprehensive protection.

Regulatory Compliance and Consent Management

Strong security measures alone aren’t enough - regulatory compliance is equally crucial. Integrating compliance protocols into your API architecture helps meet regulations like GDPR and CCPA. Non-compliance with GDPR can lead to fines of up to €20 million or 4% of global annual turnover, whichever is higher, while CCPA violations can result in civil penalties of up to $7,500 per intentional violation.

To stay compliant, tag events with user IDs to simplify access, deletion, and portability requests. Automate processes to handle consumer requests within required timeframes (e.g., 45 days under CCPA) and develop infrastructure that supports "Right to Opt Out" requests.
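Tracking the response deadline for each consumer request is simple date arithmetic. The sketch below uses the 45-day CCPA window mentioned above; the function names are hypothetical, and it deliberately ignores extensions or other statutory nuances that your legal team should define.

```python
from datetime import date, timedelta

CCPA_RESPONSE_DAYS = 45  # window mentioned in the text


def response_due(received: date, days: int = CCPA_RESPONSE_DAYS) -> date:
    """Date by which a consumer request should be answered."""
    return received + timedelta(days=days)


def is_overdue(received: date, today: date) -> bool:
    """True once the response window has passed."""
    return today > response_due(received)


due = response_due(date(2025, 1, 1))
```

Storing the due date alongside each request makes it easy to alert on requests that are approaching the deadline instead of discovering them after it has passed.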

Maintain detailed, timestamped audit logs for all interactions involving personal data. This not only assists in regulatory audits but also ensures transparency. If you use consent logic for browser-based tracking (like Pixels), extend the same logic to server-side event data APIs for consistency. For U.S.-based users, include options like "Limited Data Use" (LDU) flags in API payloads to comply with state-specific privacy laws such as CCPA.

Follow GDPR's data minimization principle by collecting and processing only the data necessary for legitimate purposes. Reduce potential breach risks by regularly pseudonymizing or retiring event data after a set period. Manage deletion requests through a dedicated user interface or API, ensuring all actions are logged for accountability.
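Because events are tagged with user IDs, a deletion request reduces to filtering the store and recording what was done. The sketch below uses an in-memory list as a stand-in for a real event store, and the audit entry layout is an assumption.

```python
from datetime import datetime, timezone


def delete_user_events(store: list[dict], user_id: str, audit_log: list[dict]) -> int:
    """Remove every event tagged with user_id and log the action.
    Returns the number of events removed."""
    before = len(store)
    store[:] = [e for e in store if e.get("user_id") != user_id]  # edit in place
    removed = before - len(store)
    audit_log.append({
        "action": "delete_user_events",
        "user_id": user_id,
        "removed": removed,
        "at": datetime.now(timezone.utc).isoformat(),  # timestamped for audits
    })
    return removed


store = [
    {"user_id": "u1", "event": "page_view"},
    {"user_id": "u2", "event": "form_submit"},
    {"user_id": "u1", "event": "email_open"},
]
audit_log: list[dict] = []
removed = delete_user_events(store, "u1", audit_log)
```

The audit entry is written even when nothing was removed, so there is always a record that the request was processed.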

Emerging Trends in Event Data Intelligence

AI-Driven Lead Scoring and Predictive Analytics

AI is reshaping lead scoring by analyzing hundreds of data points at once, including real-time behaviors and historical conversion trends. This approach moves beyond traditional, static rules like assigning points for actions such as opening an email or requesting a demo. Instead, AI continuously learns and adapts, retraining itself every few days based on win/loss data.

The results? Sales teams using AI report a 50% jump in leads and appointments. Moreover, 98% of sales teams agree that AI dramatically improves their ability to prioritize leads. Workforce Software, for instance, saw a 121% increase in engagement with in-market accounts over six months by using AI-driven account-based marketing tailored to buyers' educational journeys. AI also speeds up lead response times, cutting them by 31%. And here's the kicker: companies that respond to leads within an hour are seven times more likely to have meaningful conversations with decision-makers.

Modern AI systems take this a step further by focusing on Marketing Qualified Accounts (MQAs) instead of individual leads. They combine signals from multiple stakeholders within an organization to detect buying intent. AI also employs negative scoring to identify and filter out low-value prospects - those who are likely to churn or never convert.

"These account insights are invaluable – knowing what our clients are looking for and being able to proactively tailor and personalize their experience is a true win-win."

Karen Feldman, CMO, IBM Consulting

With these refined scoring methods, event data APIs are now driving faster, smarter follow-ups.

Automated Follow-Up Sequencing

Advanced APIs are transforming follow-up strategies by automating outreach at the moment prospects are most engaged. Whether it's after a visit to a pricing page, a demo download, or hitting a key engagement score, these workflows take over, removing the need for manual follow-ups, which can consume up to four hours a day for Sales Development Representatives.

This real-time approach pays off. Triggered follow-ups can boost conversion rates by up to 3x. High-value prospects are routed directly to senior representatives, ensuring no lead gets stuck in a queue. Tools like webhooks even send instant Slack or CRM alerts when target accounts make changes, such as updating their tech stack, giving sales teams timely opportunities to engage.

The move toward server-side event tracking adds another layer of efficiency. Backend APIs can now feed CRM or billing signals directly into automation workflows, enabling more nuanced nurturing triggers. This unified system ensures that any actions taken in the CRM are seamlessly reflected in marketing automation sequences, avoiding overlap and keeping everything in sync.

Conclusion

Transforming raw event data into meaningful actions through a single API layer is reshaping how B2B operations function. The results are hard to ignore: what once took months to implement can now be completed in just days. This shift allows businesses to concentrate on revenue-driving activities instead of getting bogged down by building custom pipelines.

Switching from polling mechanisms to event-driven webhooks introduces near-real-time responsiveness, with delays typically reduced to a few seconds and at most about a minute. This speed gives sales teams the ability to act on leads faster and improves overall B2B lead strategy and marketing alignment.

"The API layer is a critical component of modern data platforms, providing a standardized, secure, and scalable interface for integrating applications, while accommodating unique organizational needs."

Alaeddin Khader, Data Leader

The architecture behind this approach - decoupled, asynchronous, and resilient - ensures that even if one service encounters issues, events are safely buffered and processed later. This prevents the loss of valuable lead data. Such fault tolerance and event persistence create a strong and dependable foundation for growth.

If you're still relying on manual event data processing or scheduled checks that push your system to its limits, you could be missing out on major efficiency improvements. The real question is: how quickly can you implement an event data API to start converting every website visit, form submission, and engagement into actionable revenue opportunities? By adopting this streamlined API strategy, businesses can tap into immediate revenue potential and maintain their competitive edge.

FAQs

How can a single API layer help speed up lead response times?

A single API layer speeds up lead response times by automating essential tasks such as data collection, lead routing, and notifications. This automation ensures leads are quickly identified and assigned to the appropriate team members, allowing for nearly instant follow-ups.

Why does this matter? Because responding to leads within the first few minutes dramatically boosts the likelihood of turning them into customers. By simplifying these processes, businesses can seize opportunities faster and optimize their sales performance.

What are the advantages of automating lead scoring and qualification?

Automating lead scoring and qualification offers businesses some solid benefits. For starters, it enables real-time prioritization by analyzing visitor behavior and other data points to assign scores automatically. This means sales teams can focus their energy on the most promising leads, making their efforts more efficient and increasing the chances of turning prospects into customers.

Another advantage is that automation removes the need for manual input and reduces human bias. By sticking to predefined criteria - like engagement levels, company details, or specific actions - it ensures a consistent and fair evaluation of leads. Plus, automation speeds up the sales process by sending instant notifications about high-scoring prospects, allowing teams to follow up right away and improve their chances of closing deals.

In short, automating lead qualification streamlines workflows, enhances data reliability, and helps teams make smarter decisions. It bridges the gap between marketing and sales, setting businesses up for better outcomes.

How does real-time data syncing improve CRM integration?

Real-time data syncing transforms how CRM systems handle information by keeping lead and visitor data constantly updated and ready for use. This means sales and marketing teams can respond quickly to actions like website visits or form submissions without any lag. For instance, anonymous visitors can be instantly converted into actionable leads as data is automatically gathered, analyzed, and synced into the CRM.

With these updates happening in real time, critical details like lead scores, company profiles, and engagement activities remain up-to-date. This allows teams to focus on high-priority opportunities and make faster, more informed decisions. By ensuring everyone has access to the most accurate data, real-time syncing minimizes missed chances and boosts overall efficiency in managing customer relationships.
